The Difference Between Risk & Uncertainty

With the legendary success of firms like Renaissance or Citadel, complete with their army of PhDs, the pursuit of alpha is increasingly framed by the outside world as a search for high-dimensional pattern matching. Today, that search is dominated by the shadow of Geoffrey Hinton and the Deep Learning revolution.

In a world where AI is disrupting so many aspects of our personal and professional lives, the urge to use AI tools like Claude or Gemini to pick stocks and ETFs that deliver alpha is proving irresistible. The narrative is seductive: if a Large Language Model (LLM) can digest the internet to predict the next word in a sentence, surely it can digest a limit-order book to predict the next move on SPY and other popular ETFs.

Be aware, though: this analogy conceals a mathematical trap. While LLMs and quantitative finance both inhabit the domain of conditional probability, they are separated by a chasm first described by Frank Knight in Risk, Uncertainty, and Profit (1921) and later weaponized by Benoit Mandelbrot. Knight was a sceptical liberal who mentored giants like Milton Friedman, George Stigler, and James Buchanan.

LLMs face the simpler forecasting challenge, and tools built for it may not be up to the job of outperforming the market. In Knight's terms, LLMs deal with risk, where the odds can be estimated from data, whereas investing in stocks and ETFs deals with uncertainty, where the distribution itself is unknown.

Recent history has shown that the global macro-economy undergoes regime switching, as the financial markets have lurched from one crisis to another. Think of the outbreak of the Covid pandemic around 11 Mar 2020, Trump's Liberation Day on 2 Apr 2025, and more recently the outbreak of war in the Middle East on 28 Feb 2026. As new regimes were ushered in, many statistical properties of stocks, bonds and commodities changed noticeably, leaving many asset allocation models misaligned with the new situation investors were facing.

Hinton's Cooperative Universe vs. The Adversarial World of Investing

The deep learning breakthroughs of Geoffrey Hinton, the Godfather of AI, and the Transformer architecture that built on them to power LLMs, rely on a fundamental assumption: the data reflects a stable reality. Human language, though complex, is a cooperative system. If a Transformer learns that the word bark tends to appear alongside dog, it has captured a semantic law that is unlikely to change tomorrow. Put another way, the manifold of language is high-dimensional, but it is largely stationary.

In the quant world, we deal with a less forgiving arena of adversarial market participants. What is in their best interest is rarely in yours. When a signal is identified, the very act of trading on it - what Donald MacKenzie calls Performativity - alters the underlying probability distribution. In the language of stochastic calculus, we are not just observing a process; we are shifting the measure. While an LLM addresses a static puzzle, an investor is trying to solve a puzzle that rearranges itself specifically to prevent being solved.

Mandelbrot's Warning: The End of Simplicity

Historically, stochastic calculus was most heavily used on the 'sell side', where large firms relied on it to value and risk-manage structured products and other option-based derivatives. The models were invariably built on very sophisticated mathematics, as trading desks looked to manage the positions they held with their institutional counterparties. Nonetheless, most derivatives pricing models clung to the wreckage of Gaussian stationarity, where it is assumed that if you observe the S&P 500 for long enough, the sample mean will converge to the population mean. Mathematicians might know that as the Ergodicity Axiom; we mere mortals recognize it as the assumption that the range and volatility of an ETF's monthly returns stay stable through time, when in practice they vary.

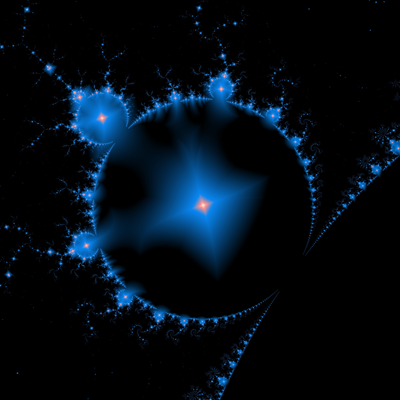

Figure 1 – The Mandelbrot Reality: Visualizing price action as a fractal process rather than a stable Gaussian distribution.

Benoit Mandelbrot's study of price action was the first shot across the bow for this theory. He argued that financial returns are better described by Lévy-stable processes with infinite variance: they are fractal and non-ergodic. While that might sound too technical to be of interest, it tells us what seasoned investors already know: expect the unexpected. The market will behave in any way it likes, and whatever you thought about value stocks might be out of date by the time you put your position on. Stock returns are not only random; modelling that randomness requires much more mathematical machinery than is accessible to the mainstream.

Yet again, we need to differentiate between the magic that LLMs appear to weave and the unreliability that comes with investing. LLMs are essentially interpolative engines; they work within the known boundaries of their training data. Markets, by contrast, demand extrapolation. A regime switch, like the 2020 COVID-19 liquidity vacuum, creates a structural break after which the previous 10,000,000,000,000 data points of training become mathematically irrelevant.

Three Key Metrics to Monitor

To bridge the gap between intuition and execution, we must quantify the degree of non-stationarity. To keep track of that for any ETF, we propose calculating a Non-Stationarity Score (NSS), a composite index that measures statistical decay across three critical dimensions:

Volatility of Volatility (VoV) – the Kurtosis of Risk

While standard volatility tells us the size of an asset's swings, Vol of Vol measures the instability of the risk regime itself. In the Black-Scholes model, volatility is a constant. In a non-stationary world, it is itself a stochastic process. High Vol of Vol is the hallmark of so-called jump-diffusion: in these more sophisticated stochastic processes, the probability of a Black Swan event is not a static tail risk but a dynamic variable that can spike overnight.
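As a rough illustration of the idea, the sketch below measures Vol of Vol as the dispersion of a rolling-volatility series relative to its average level. The 20-day window, the function names and the synthetic return series are all assumptions made for the example; the article does not prescribe a specific estimator.

```python
import random
import statistics

def rolling_volatility(returns, window=20):
    """Sample standard deviation of returns over each trailing window."""
    return [
        statistics.stdev(returns[i - window:i])
        for i in range(window, len(returns) + 1)
    ]

def vol_of_vol(returns, window=20):
    """Dispersion of the rolling-volatility series, scaled by its mean level."""
    vols = rolling_volatility(returns, window)
    return statistics.stdev(vols) / statistics.mean(vols)

# Synthetic daily returns (illustrative only, not market data).
rng = random.Random(0)
calm = [rng.gauss(0.0, 0.01) for _ in range(500)]
# Same mean, but volatility triples half-way through: a crude regime switch.
switching = [rng.gauss(0.0, 0.01 if t < 250 else 0.03) for t in range(500)]

vov_calm = vol_of_vol(calm)
vov_switching = vol_of_vol(switching)
print(f"VoV, constant-volatility series: {vov_calm:.2f}")
print(f"VoV, regime-switching series:    {vov_switching:.2f}")
```

Even the constant-volatility series shows a non-zero reading, because a 20-day window estimates volatility with noise; it is the gap between the two readings that carries the signal.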

Kullback-Leibler Divergence – Semantic Drift

In LLMs, we measure how far a model's predicted distribution has strayed from the target. In finance, we can use KL Divergence to measure distributional decay, comparing the return distribution of the last 20 days against that of the previous 250 days. A spike in KL Divergence is the mathematical smoking gun of a regime switch. It tells the manager that the grammar of the current month is no longer written in the same language as the previous year.
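One way to make the 20-day versus 250-day comparison concrete is to bin both windows on a shared grid and compute the discrete KL divergence between the two histograms. The sketch below does that under several assumptions of our own: 20 equal-width bins, a pseudo-count of 0.5 per bin to avoid division by zero, and synthetic Gaussian returns in place of real data.

```python
import math
import random

def histogram_probs(sample, edges, pseudo_count=0.5):
    """Bin a sample on shared edges and return smoothed probabilities."""
    counts = [pseudo_count] * (len(edges) - 1)
    for x in sample:
        idx = 0
        # Walk right until x falls inside the bin; the last bin is closed.
        while idx < len(counts) - 1 and x >= edges[idx + 1]:
            idx += 1
        counts[idx] += 1
    total = sum(counts)
    return [c / total for c in counts]

def kl_divergence(recent, reference, n_bins=20):
    """D_KL(P || Q), with P the recent window and Q the reference window."""
    lo = min(min(recent), min(reference))
    hi = max(max(recent), max(reference))
    width = (hi - lo) / n_bins
    edges = [lo + i * width for i in range(n_bins + 1)]
    p = histogram_probs(recent, edges)
    q = histogram_probs(reference, edges)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))

# Synthetic data (illustrative only): 250 reference days, then 20 recent days.
rng = random.Random(42)
reference = [rng.gauss(0.0, 0.01) for _ in range(250)]
same_regime = [rng.gauss(0.0, 0.01) for _ in range(20)]
new_regime = [rng.gauss(-0.01, 0.03) for _ in range(20)]

kl_same = kl_divergence(same_regime, reference)
kl_shift = kl_divergence(new_regime, reference)
print(f"KL, recent window from the same regime: {kl_same:.3f}")
print(f"KL, recent window from a new regime:    {kl_shift:.3f}")
```

With only 20 observations in the recent window the estimate is noisy, so in practice one would watch for spikes relative to the score's own history rather than read any single value in isolation.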

The Hurst Exponent – Memory and Persistence

Mandelbrot popularized the Hurst Exponent, H, as a measure of long-term dependency. While a random walk with independent increments maintains H = 0.5, non-stationary ETFs exhibit Hurst Drift. When H moves toward 0.7, the market is in a persistent, trending regime; when it collapses below 0.4, it is mean-reverting. An ETF with a high Non-Stationarity Score is one where the Hurst Exponent itself is volatile, indicating a system that cannot decide on its own physics.
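There are several estimators of H; the sketch below uses one simple choice, the scaling of lagged differences, where the standard deviation of the change over a given lag grows roughly like the lag raised to the power H. The lag range, the function names and the three synthetic paths are assumptions for illustration, not a recommended calibration.

```python
import math
import random
import statistics

def hurst_exponent(path, lags=range(2, 21)):
    """Estimate H from how the spread of lagged differences scales with lag."""
    log_lags, log_spreads = [], []
    for lag in lags:
        diffs = [path[i + lag] - path[i] for i in range(len(path) - lag)]
        log_lags.append(math.log(lag))
        log_spreads.append(math.log(statistics.pstdev(diffs)))
    # Ordinary least-squares slope of log(spread) on log(lag).
    mean_x = statistics.fmean(log_lags)
    mean_y = statistics.fmean(log_spreads)
    num = sum((x - mean_x) * (y - mean_y) for x, y in zip(log_lags, log_spreads))
    den = sum((x - mean_x) ** 2 for x in log_lags)
    return num / den

def cumulate(steps):
    """Turn a sequence of increments into a path of levels."""
    level, path = 0.0, []
    for step in steps:
        level += step
        path.append(level)
    return path

rng = random.Random(1)
n = 2000

# Random walk: independent increments.
random_walk = cumulate([rng.gauss(0, 1) for _ in range(n)])

# Trending path: increments are positively autocorrelated.
steps, prev = [], 0.0
for _ in range(n):
    prev = 0.6 * prev + rng.gauss(0, 1)
    steps.append(prev)
trending = cumulate(steps)

# Mean-reverting path: the level itself is pulled back toward zero.
mean_reverting, level = [], 0.0
for _ in range(n):
    level = 0.5 * level + rng.gauss(0, 1)
    mean_reverting.append(level)

h_rw = hurst_exponent(random_walk)
h_trend = hurst_exponent(trending)
h_mr = hurst_exponent(mean_reverting)
print(f"H random walk:    {h_rw:.2f}")
print(f"H trending:       {h_trend:.2f}")
print(f"H mean-reverting: {h_mr:.2f}")
```

Re-estimating H on a rolling window and tracking how much the estimate moves would give a "Hurst Drift" reading of the kind described above.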

Figure 2 – Capturing Alpha: Monitoring the transition points between stationary risk and Knightian uncertainty.

Don't Confuse Your Inputs for Your Outputs

In the tradition of Friedrich Hayek and later the Efficient Market Hypothesis (EMH), the market is a distributed computing engine. The input is the firehose of exogenous and endogenous data: interest rate pivots, supply chain disruptions, geopolitical shocks, and even the internal state of market participants (think margin calls and rebalancing mandates).

The closing price is the output of the matching engine: the final coordinate where the supply of shares meets the demand for shares at a specific millisecond. Mathematically, it is the realized aggregate of the random steps of a stochastic process up to the current point of observation.

Think about how an LLM works. When you ask it a question, the output is a word (a token). But that word isn't the intelligence of the model; the intelligence lives in the latent space (the embeddings), the high-dimensional vector math happening behind the scenes.

- In AI the Latent Vector is the input/state; the Token is the output.

- In Finance the Market Regime (volatility, liquidity, sentiment) is the state; the Closing Price is the token.

If you only forecast using closing prices (the tokens), you are ignoring the semantic structure of the market that generated that price. This is why Price Action trading often fails during regime shifts; the price looks the same, but the underlying latent vector has rotated.

What about the world of Factor Investing?

If you view price as an input, you fall into the trap of autoregression. You assume the price today explains the price tomorrow. In a non-stationary world, this is dangerous because of reflexivity. As George Soros argued, the output (the price) can loop back and become an input for the next period by changing participant behaviour.

The quant view is that we don't forecast price, we instead forecast the probability distribution of the state variables (the Greeks, the Heatmap, the Order Flow). If we get the state right, the price (the output) follows as a mathematical necessity.

If you treat price as the output, then non-stationarity isn't just that the price is moving weirdly. It means the generating function has changed. Under stationarity, the machine produces different outputs but the machine itself stays the same; under non-stationarity, it is as if the machine has been replaced.

Once one accepts the stylized fact that ETF returns exhibit non-stationarity, many of the tried and tested truisms of investing need revisiting. One has only to think of the many fundamental variables used by fund selectors, such as the P/E ratio, to see why such an approach might be likened to the pre-Copernican approach to studying planetary motion: doomed to failure by virtue of ignoring the facts. The price and earnings terms in the P/E ratio operate on different time frequencies and need to be rethought as an asset lives through a regime shift.

Moving on to the notion of how the idea of Factor Investing sits alongside a world of ever shifting probability distributions, let's think about the Momentum, Value and Quality factors.

Momentum Factor: The Autoregressive Feedback Loop

In an LLM, degenerate repetition occurs when the model gets caught in a loop, where the probability of the next token is overwhelmingly dictated by the previous tokens. Momentum is the financial equivalent of this autoregressive state.

- The Output Mechanism: In a Momentum regime, the price is no longer an output of new information; it is an output of the previous price. This is a self-referential state where the attention of the market is fixed on the trend itself.

- The Non-Stationary Risk: Momentum is inherently non-stationary because it relies on a constant inflow of capital to maintain the drift. When the attention of the crowd shifts, the Hurst Exponent might collapse from 0.7 to 0.2 almost instantly. This is the momentum crash, a classic structural break.

Value Factor: The Mean-Reverting Elastic

Value is the market's attempt at error correction. If we view price as the output, then value is the signal that the output has strayed too far from the latent fundamentals, i.e. the earnings, and the book value.

- The Output Mechanism: Price formation in a value regime is driven by mean-reversion math. The market is attending to the discrepancy between the current token and its historical baseline.

- The Non-Stationary Risk: Value suffers from the stationarity trap. Quants often assume the mean is fixed. However, in a Mandelbrot world, the mean itself can shift. A value stock can become a value trap because the market has switched to a new regime where the old valuation grammar is simply no longer relevant.

Quality Factor: The Low-Entropy Signal

Quality (High ROE, Low Debt) is the quest for stationarity. Investors pay a premium for quality because they are looking for low-entropy outputs. They want a price process where the drift is predictable, and the jumps are rare.

- The Output Mechanism: The price of a quality ETF (for example iShares MSCI USA Quality Factor ETF) is an output of confidence. The market weights these assets more heavily when the global Non-Stationarity Score is high.

- The Non-Stationary Risk: Because quality is a crowded trade during periods of uncertainty, it is susceptible to liquidity shocks. When everyone tries to exit the stable asset at once, the stationary asset suddenly exhibits the most violent non-stationary jump.

Factor premiums are simply the compensation for taking on regime-switching risk. You don't get paid for buying value; you get paid for the risk that the value regime will never return to the mean. You don't get paid for momentum; you get paid for the risk of the structural break. By ranking ETFs using the NSS, you are effectively measuring which factor's probability manifold is starting to decay, and which is becoming the dominant grammar of the market.

Figure 3 – The Logic of Jumps: Identifying non-stationary 'tokens' in the market before the latent vector rotates.

The Factor Label vs. The Latent Driver

When a commentator calls an ETF a Value ETF, they are looking at the input (the accounting ratios). When we look at it, we are looking at the output (the price formation grammar).

- The Commentator's View (Static): "This ETF holds stocks with low P/E ratios; therefore, it is a Value ETF."

- The Quant's View (Dynamic): "This ETF currently has a price formation dominated by mean-reversion and low-growth expectations. However, if the market's 'attention' shifts, these same stocks may start exhibiting Momentum or Quality characteristics."

The Style Drift as a Regime Switch

In a non-stationary world, an ETF can drift out of its factor category without changing a single holding. If a value stock suddenly catches a thematic wave (like an energy stock during a supply shock), its price formation switches from mean-reversion (value) to autoregressive persistence (momentum).

If your model still treats it as value, you will sell too early, because you are using the wrong grammar to forecast the output.

Treating Factors as Regimes, Not as Buckets

Factors should be viewed as attractors in a high-dimensional space. An ETF's returns are a stochastic process that is constantly being pulled toward different factor attractors.

- In a Stationary World: An ETF stays in its bucket.

- In our Mandelbrot World: The ETF is a point on a manifold. One month it is quality, the next it is volatility. This is why factor timing is so difficult - the non-stationarity of the market causes the very definition of the factor to mutate.

The Semantic Shift of Quality and Value

Consider the quality factor. In a low-interest-rate regime, quality might be defined by high growth and high margins (Tech). In a high-inflation regime, quality might shift to mean pricing power and physical assets (commodities).

If you are a manager at a firm like Citadel, you aren't looking for value stocks. You are looking for the moment of transition - the point where the KL Divergence of an ETF's returns suggests it is moving from one factor regime to another.

You can argue that the value label is a lagging indicator. By the time the commentators call it a value stock, the market has already finished the value price formation and is moving into something else. The Non-Stationarity Score captures this factor decay in real-time.

In the retail narrative, factors like value and quality are treated as static identities - labels pinned to an ETF's lapel. But in a non-stationary framework, these are merely transient semantic regimes. Just as an LLM realizes that the word apple shifts from a fruit to a technology giant based on the surrounding tokens, a sophisticated model must realize that an ETF's identity shifts as the market's attention rotates. A value stock is only a value stock as long as the market's output grammar remains mean-reverting. The moment it breaks into a jump-diffusion trend, the old labels become a liability.

The NSS ranking doesn't just rank ETFs; it ranks the stability of their factor attribution. With a low NSS, the ETF is behaving according to its label (a stationary factor), whereas with a high NSS the ETF is undergoing a factor metamorphosis, a non-stationary transition.
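The article does not spell out how the three metrics are combined, so the sketch below is only one plausible construction: standardize each metric across the ETF universe and take an equal-weighted average. The equal weights, the field names, the placeholder tickers and the metric values are all hypothetical.

```python
import statistics

def z_scores(values):
    """Standardize a list of values to zero mean and unit spread."""
    mean = statistics.fmean(values)
    spread = statistics.pstdev(values)
    return [(v - mean) / spread if spread > 0 else 0.0 for v in values]

def nss_ranking(metrics):
    """Rank ETFs from most stationary (lowest score) to least stationary."""
    names = list(metrics)
    columns = ("vol_of_vol", "kl_divergence", "hurst_instability")
    standardized = [z_scores([metrics[n][c] for n in names]) for c in columns]
    scores = {
        name: statistics.fmean([col[i] for col in standardized])
        for i, name in enumerate(names)
    }
    return sorted(scores.items(), key=lambda item: item[1])

# Hypothetical, hand-picked metric values for three placeholder ETFs.
universe = {
    "ETF_A": {"vol_of_vol": 0.15, "kl_divergence": 0.05, "hurst_instability": 0.03},
    "ETF_B": {"vol_of_vol": 0.30, "kl_divergence": 0.20, "hurst_instability": 0.06},
    "ETF_C": {"vol_of_vol": 0.60, "kl_divergence": 0.90, "hurst_instability": 0.15},
}

ranking = nss_ranking(universe)
for name, score in ranking:
    print(f"{name}: NSS = {score:+.2f}")
```

Because the score is a cross-sectional z-score, it says which ETFs are less stable than their peers today, not whether the universe as a whole has become unstable; a time-series version would need its own baseline.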

The Tale of Two Calibrations

Imagine a portfolio manager in 2008. Their LLM-style predictive model had been trained on the Great Moderation. When the crisis hit, the model calculated the conditional probability of the observed moves as a 20-sigma event, which under its Gaussian assumptions is mathematically nigh on impossible.
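To put a number on "nigh on impossible", the short sketch below computes the one-sided Gaussian tail probability for moves of various sizes. It assumes returns are standard normal after scaling, which is precisely the assumption under attack; the function name is ours.

```python
import math

def gaussian_upper_tail(sigmas):
    """P(Z > sigmas) for a standard normal Z, via the complementary error function."""
    return 0.5 * math.erfc(sigmas / math.sqrt(2.0))

for k in (3, 5, 10, 20):
    print(f"{k:>2}-sigma move: probability {gaussian_upper_tail(k):.3e}")

p20 = gaussian_upper_tail(20)
```

Under the Gaussian model, the expected wait for a single 20-sigma day is many orders of magnitude longer than the age of the universe. When such a day actually turns up, the sensible conclusion is that the model is wrong, not that the market was unlucky.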

The failure wasn't in the model's intelligence - it was in the assumption of stationarity. The model was looking at the grammar of a calm sea while the tectonic plates had already shifted. This is why the Hamilton filter (regime switching) and Girsanov's theorem (the bridge between the real-world and risk-neutral measures) are more vital to survival than any neural network's depth.
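For readers who have not met it, the Hamilton filter is a predict-and-update recursion that tracks the probability of being in each regime given the returns seen so far. The sketch below is a minimal two-regime version with Gaussian returns. It assumes the regime means, volatilities and persistence are already known, whereas in practice they would be estimated; the parameter values and the synthetic data are illustrative only.

```python
import math
import random

def normal_pdf(x, mean, std):
    """Density of a normal distribution at x."""
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))

def hamilton_filter(returns, means, stds, stay_prob, prior=(0.5, 0.5)):
    """Filtered P(regime | returns so far) for a two-state Gaussian model."""
    # transition[i][j] = P(next regime = j | current regime = i)
    transition = [
        [stay_prob[0], 1.0 - stay_prob[0]],
        [1.0 - stay_prob[1], stay_prob[1]],
    ]
    belief = list(prior)
    filtered = []
    for r in returns:
        # Predict: push the previous belief through the transition matrix.
        predicted = [
            sum(belief[i] * transition[i][j] for i in range(2)) for j in range(2)
        ]
        # Update: reweight by how well each regime explains the new return.
        joint = [predicted[j] * normal_pdf(r, means[j], stds[j]) for j in range(2)]
        total = sum(joint)
        belief = [w / total for w in joint]
        filtered.append(belief)
    return filtered

# Synthetic returns: 200 calm days followed by 50 turbulent days.
rng = random.Random(7)
returns = [rng.gauss(0.0, 0.01) for _ in range(200)]
returns += [rng.gauss(0.0, 0.04) for _ in range(50)]

probs = hamilton_filter(
    returns, means=(0.0, 0.0), stds=(0.01, 0.04), stay_prob=(0.98, 0.98)
)
calm_avg = sum(p[1] for p in probs[:200]) / 200
turbulent_avg = sum(p[1] for p in probs[210:]) / 40
print(f"Average P(turbulent) during calm period:      {calm_avg:.2f}")
print(f"Average P(turbulent) during turbulent period: {turbulent_avg:.2f}")
```

The filter never declares a regime outright; it reports a probability that adapts as evidence accumulates, which is the sense in which it knows when to stop believing its calm-sea calibration.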

A sophisticated manager should plot ETFs not just on a risk/reward axis, but on a stationarity/entropy axis:

- Low NSS – stationary beta: Assets where the Hurst Exponent is stable and KL Divergence is near zero. These are safe for traditional mean-variance optimization and leveraged structured products.

- High NSS - the alpha frontier: Assets like ARKK or commodity ETFs during supply shocks. Here, the loss function of a standard model will explode. The opportunity lies in Bayesian online learning - using LLM-style attention to identify which past regime most closely resembles the current flux and weighting that data accordingly.
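The idea of weighting past regimes by their resemblance to the present can be sketched with an attention-style softmax over similarity scores. The version below departs from textbook dot-product attention by scoring similarity as negative squared distance, and it is not a full Bayesian online learner. The regime labels, the three standardized features and the temperature are all hypothetical.

```python
import math

def attention_weights(query, keys, temperature=1.0):
    """Softmax over similarity scores between the current state and past regimes."""
    scores = {
        name: -sum((q - k) ** 2 for q, k in zip(query, key)) / temperature
        for name, key in keys.items()
    }
    top = max(scores.values())  # subtract the max for numerical stability
    exps = {name: math.exp(s - top) for name, s in scores.items()}
    total = sum(exps.values())
    return {name: e / total for name, e in exps.items()}

# Hypothetical regime fingerprints: (volatility, Hurst, vol of vol), standardized.
past_regimes = {
    "calm": (-1.0, 0.0, -1.0),
    "trending": (0.0, 1.5, 0.0),
    "crisis": (2.0, -0.5, 2.0),
}
current_state = (1.5, -0.2, 1.8)

weights = attention_weights(current_state, past_regimes, temperature=4.0)
for name, w in sorted(weights.items(), key=lambda item: -item[1]):
    print(f"{name:>8}: weight {w:.2f}")
```

The weights could then be used to blend regime-specific estimates, for example a volatility forecast calibrated separately on each past regime. A lower temperature concentrates the weight on the single closest regime, while a higher one spreads it more evenly.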

Conclusion: The Architect vs. The Alchemist

Geoff Hinton gave us the ultimate architecture for a static world. But as Mandelbrot taught us, the market is not a library; it is a storm. The future of quantitative management lies in the synthesis: using the attention mechanisms of the AI revolution to identify which regime of the Mandelbrot world we have just entered.

The alpha isn't in the prediction; it's in the calibration. In a world of Knightian uncertainty, the most valuable model is the one that knows when to stop believing its own training data.

Most investors only look at returns and volatility, but you should supplement this with a third dimension: system stability (stationarity). On that basis we can now talk in terms of two strategies covering the two NSS regimes.

Strategy A - The Anchor Portfolio (Low NSS)

In periods of global macro uncertainty, a manager might tilt toward ETFs with the lowest NSS. These are assets where the math still works. They are predictable, making them better candidates for leveraged positions or structured products where the cost of a Black Swan event is terminal.

Strategy B - The Convexity Play (High NSS)

If a manager is seeking alpha, they might look for ETFs that are entering a period of high non-stationarity. This is where jumps and regime switches happen. While harder to model, these are the environments where traditional models fail, creating mispricing opportunities for those using more adaptive, LLM-style attention models.

Alpha isn't found in predicting the next price in a stationary world; it's found in identifying that the world has changed before everyone else's models have finished recalculating.

Until next time.

Allan Lane